
Configuring Codex Securely Across Every Platform and Use Case

Enterprise security guide for Codex: control AI risk with settings for Computer Use, permissions, sandboxing, approval policies, requirements.toml, and more.

Published: May 3, 2026

Overview

Codex is an agentic tool that started out as a coding assistant but, in the words of OpenAI's most recent release, is now capable of ‘almost everything’. As its feature scope expands, including Computer Use to compete with Claude Cowork, so does its risk surface. Taking actions directly on users’ computers, running repeatable automated jobs through ‘Automations’, and a continually growing list of plugins and integrations have created a formidable sprawl for organization admins to monitor and manage. In this article, we break down the threat model and the specific configurations needed to give Codex an appropriate security-functionality trade-off for your use cases, platforms, and user populations.

The Threat Model

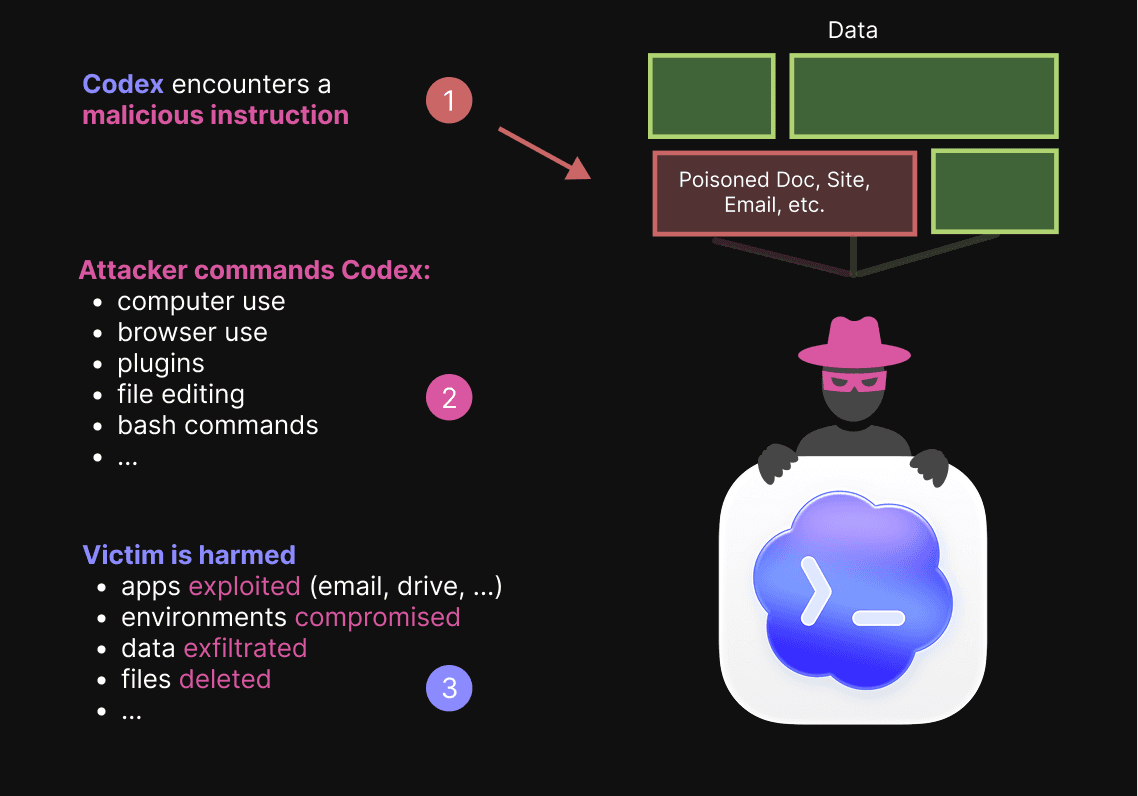

Risk for organizations using Codex varies with the platforms it is used on, the features enabled, and the type of work being done. The primary risk is indirect prompt injection: instructions planted in data the agent reads hijack the user’s agent to accomplish an attacker's goals.

This April, we responsibly disclosed an indirect prompt injection vulnerability that allowed for the exfiltration of full, sensitive emails (e.g., legal, health, and security-related) from Codex with no user interaction required – in the default permissions mode.

To combat this risk, organizations can apply controls along two facets, and we provide actionable configurations for both:

(1) Inputs: reduce the likelihood of a prompt injection attack by limiting the processing of untrusted data sources that may contain injections.

Examples: restrict connections to plugins that contain externally sourced data, such as email, web data, and files on users’ computers.

(2) Outputs: limit the impact of a successful attack by reducing the sensitive data at risk and the sensitive actions Codex can take without human oversight.

Examples: prohibiting computer use in sensitive apps, ‘full access’ mode (which can edit any file on the computer or make any network request), and ‘auto-review’ mode (in which the agent can choose to leave its sandbox).

Codex is divided into two major sets of features: Codex Local and Codex Cloud. The prompt injection risk surface varies across these two feature sets, and there are steps organizations can take to address untrusted input vectors and sensitive output vectors across both surfaces. Below we threat model each, and then provide configuration advice in the following sections.

Plugins Note: Plugins are both a source of untrusted or sensitive inputs and a potential vector for sensitive actions, so each enabled plugin increases the risk surface for both Codex Local and Codex Cloud. Plugin controls are global and apply beyond Codex to ChatGPT, Atlas, and ChatGPT Mobile. Plugins follow workspace app controls and can be enabled or disabled by administrators from Workspace Settings > Apps, with per-tool controls available via each plugin's Manage actions menu. These restrictions can be applied using RBAC to give specific users access to different plugins.

Codex Local Threat Model

Codex Local includes the Codex App, the Command Line Interface, and the Codex IDE extension. For these deployments, the Codex agent operates within a sandbox that runs on the user’s computer.

Codex Local is not covered by the Compliance API; OpenAI states: “Usage in local environments is not available”. Organizations can enable OpenTelemetry (OTEL) through a managed configuration, but managed configurations are delivered via the managed_config.toml file, which comes with a caveat: “Users can still change settings during a session”. To work around this, an organization would also need to configure a hook in the admin-enforced requirements.toml file.

Codex Local is enabled by default for all new enterprises. If your organization has not yet been able to evaluate Codex Local, or does not intend to allow local use, the feature can be disabled via Workspace Settings > Settings and Permissions > Codex Local > Allow members to use Codex Local.

Codex Local is inherently riskier than Codex Cloud, as the boundary between Codex and sensitive user data is minimal. For example, the Codex Desktop App can read any file on the user’s computer, even in the most restrictive permissions mode, ‘default mode’. For comparison, Codex Cloud is restricted to the code files provided to it at start-up.

Another pertinent setting for Codex Local is Workspace Settings > Settings and Permissions > Codex Local > Enable device code authentication for Codex CLI.

This setting allows authentication using sign-in codes, making it easier to log into development environments that do not have a browser. However, as OpenAI notes, this carries a risk: if a user is phished for a sign-in code, the code grants the attacker immediate access.

The primary mechanisms for addressing Codex Local risks are requirements.toml and managed_config.toml files, deployed either through https://chatgpt.com/codex/cloud/settings/policies or via MDM. Details on configuring these files follow the threat model.

Codex Cloud Threat Model

Codex Cloud comprises the Codex iOS app, Codex Code Review, Codex Web, and the Codex integrations for Slack and Linear. On these surfaces, Codex runs in a containerized environment in OpenAI’s cloud, with access to a user's codebase, packages, and other user-configured elements.

Note: For the Linear, GitHub, and Slack integrations, the primary risk lies in how untrusted data sources (e.g., externally sourced Linear issues, PRs from untrusted contributors, and messages from untrusted Slack users) can manipulate the model into accessing sensitive cloud environments or environments with weak egress settings. Per-integration breakdowns of this risk and the necessary integration configurations are at the end of the article.

Codex Cloud users are responsible for configuring network access for their own cloud environments. The ‘Common Dependencies’ configuration provided by OpenAI carries multiple data egress risks, including exfiltration attack chains demonstrated by security researchers that remain viable today.

By default, Codex Cloud agents have internet access during their start-up sequence (for example, to install packages) but not while the agent is running, unless users change the environment configuration when creating the environment.

Cloud environments each have a blanket On or Off setting for Network egress. If Network egress is enabled, a Domain Allowlist policy must be selected. Here is a breakdown of the risks for the different domain allowlist policies:

| Policy | Impact |
|---|---|
| None | Medium: allows specifying individual domains to allowlist and blocks all others. This is a medium risk because users are responsible for ensuring the added domains cannot be used for network egress to attacker-controlled infrastructure. This is easier said than done: many consumer services a user might want to allowlist can serve as an exfiltration channel, with a prompt injection supplying an attacker's API key or cookie and manipulating the model into uploading data to the attacker's account on that site. An example of this risk can be seen in our Claude Cowork Exfiltrates Files article, where giving an agent access to Anthropic’s API, a trusted system, was enough to exfiltrate files by sending them to an attacker’s Anthropic account. |
| Common dependencies | High: the common dependencies allowlist (visible here) includes many domains that a prompt injection can use as an exfiltration channel. Furthermore, because attackers know many users rely on this allowlist, they know that targeting exfiltration via these domains is a viable method of attack. For example, azure.com is on the list; security researcher Johann Rehberger demonstrated a data exfiltration attack against Codex leveraging this exact vector, an attack chain that remains viable today. |
| All (Unrestricted) | Critical: with this configuration, an agent-agnostic and environment-agnostic prompt injection can exfiltrate data to arbitrary attacker-controlled domains. Not recommended unless the Codex environment contains no sensitive data. |

We strongly recommend that organizations require Agent internet access > Off when creating environments. If access is required, use the Domain allowlist: None configuration with a specific, vetted allowlist of domains for each use case, and create a separate environment for each use case.
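Because environment network settings are configured per user, admins may want to review them periodically. The Python sketch below encodes the recommendations above against a hypothetical inventory record: the `CloudEnvironment` fields are our own shape, filled in by hand or from whatever export you maintain, and are not a Codex API object. It flags any environment with agent internet access that is not using the None policy with vetted domains.

```python
from dataclasses import dataclass, field

# Risk ratings taken from the domain allowlist table in this article.
POLICY_RISK = {
    "none": "Medium",
    "common dependencies": "High",
    "all (unrestricted)": "Critical",
}


@dataclass
class CloudEnvironment:
    """Hypothetical inventory record for one Codex Cloud environment."""
    name: str
    agent_internet_access: bool
    domain_allowlist_policy: str = "None"
    allowed_domains: list[str] = field(default_factory=list)
    contains_sensitive_data: bool = True


def assess(env: CloudEnvironment, vetted_domains: set[str]) -> list[str]:
    """Return findings for one environment; an empty list means it follows the guidance."""
    if not env.agent_internet_access:
        return []  # Recommended default: no egress while the agent runs.
    policy = env.domain_allowlist_policy.strip().lower()
    findings = []
    if policy != "none":
        risk = POLICY_RISK.get(policy, "Unknown")
        findings.append(f"{env.name}: '{env.domain_allowlist_policy}' allowlist is {risk} risk")
    if policy == "all (unrestricted)" and env.contains_sensitive_data:
        findings.append(f"{env.name}: unrestricted egress from an environment with sensitive data")
    # Every custom domain should have been vetted as unusable for uploads to attacker accounts.
    unvetted = sorted(set(env.allowed_domains) - vetted_domains)
    if unvetted:
        findings.append(f"{env.name}: domains not on the vetted list: {', '.join(unvetted)}")
    return findings
```

For example, `assess(CloudEnvironment("api", True, "Common dependencies"), set())` reports the High-risk allowlist, while an environment with internet access off produces no findings.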

Balancing Functionality With Risks for Codex Local Using Admin-Enforced Requirements and Managed Configurations

Codex provides two primary mechanisms for organization administrators to enforce modifications on Codex Local capabilities. These apply to the CLI, desktop app, and the IDE extension.

Below, we break down the admin-enforced requirements settings that achieve an appropriate security-functionality trade-off for several use cases, and then outline nice-to-have defense-in-depth, productivity, and observability enhancements available through a Managed Configuration.

Admin-Enforced Requirements (requirements.toml)

Admin-enforced requirements, defined in a requirements.toml file, are the primary mechanism for security-related configuration. We strongly recommend configuring requirements.toml to match your users' needs and lock down any risk surface their work does not require.

To serve differing use cases, admins can write multiple files enforced on different user groups, and can set a fallback policy for users who do not belong to a group.

Note: Per OpenAI documentation, “Codex applies managed requirements on a best-effort basis.” This means that “If no valid [file] is available…, Codex continues without the managed requirements layer.”

Requirements can come from several locations. Codex considers all available sources, but when the same key appears in more than one, only the value from the highest-precedence source is used. In order of precedence:

Cloud-managed requirements

macOS MDM distributed requirements

System requirements (e.g., local files on the end user’s device)

Cloud-managed requirements can be configured at https://chatgpt.com/codex/cloud/settings/policies.
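The key-level precedence rule can be sketched in Python. This models the documented behavior and is not Codex's code: sources are passed highest precedence first, and each top-level key takes its value from the first source that defines it. How Codex resolves keys nested inside tables such as `[features]` is not covered by this sketch.

```python
from typing import Any


def effective_requirements(*sources: tuple[str, dict[str, Any]]) -> dict[str, tuple[Any, str]]:
    """Merge requirement sources given highest precedence first.

    Returns {key: (value, source_name)}; lower-precedence duplicates are ignored.
    """
    merged: dict[str, tuple[Any, str]] = {}
    for name, settings in sources:
        for key, value in settings.items():
            # setdefault keeps the first (highest-precedence) value seen for each key.
            merged.setdefault(key, (value, name))
    return merged
```

Recording which source won each key makes it easier to explain to a user why a local setting had no effect.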

Organization admins can use the requirements file to address the following:

When an approval review step is initiated (e.g., never, for all commands not marked trusted, when Codex chooses, or granular rules)

Who can handle an approval review step (a human or another agent)

Managed policy instructions for agent approval reviewers

Specific programmatic policies for when to automatically deny an approval or require a human approval prompt

Local filesystem read restrictions for agents

Which sandbox modes users can choose (e.g., writes allowed in the sandbox, read-only with approval for edits, or no sandbox at all, a.k.a. ‘yolo’ mode)

Whether agents in sandboxed workspaces have network access

Specific sandbox overrides for different host operating systems (e.g., macOS vs Linux)

What web search modes are allowed (live, cached, disabled)

Managed hooks (to take custom actions on specific triggers, like blocking certain command patterns or logging tool call results)

What MCP servers users are able to configure

What features are enabled or disabled (e.g., browser use, computer use, in-desktop-app browser pane, memories, multi-agent use)

Per-control settings across three risk profiles: High Utility, Balanced, and Security-oriented

| Control | High Utility | Balanced | Security-oriented |
|---|---|---|---|
| `allowed_approval_policies` | `["untrusted", "on-request", "never"]` | `["untrusted"]` | `["never"]` |
| `allowed_approval_reviewers` | `["auto_reviewer", "user"]` | `["user"]` | `["user"]` |
| `permissions.filesystem.deny_read` | `[]` | `[".env", "**/.env", "**.env.*"]` | `[".env", "**/.env", "**.env.*"]` |
| `allowed_sandbox_modes` | `["read-only", "workspace-write", "danger-full-access"]` | `["read-only", "workspace-write"]` | `["read-only"]` |
| `allowed_web_search_modes` | `["live", "cached"]` | `["cached"]` | `["disabled"]` |
| `features.skill_mcp_dependency_install` | `true` | `false` | `false` |
| `features.memories` | `true` | `true` | `false` |
| `features.computer_use` | `true` | `false` | `false` |
| `features.browser_use` | `true` | `false` | `false` |
| `features.in_app_browser` | `true` | `true` | `false` |
| `features.apps` | `true` | `true` | `false` |
| `hooks.<event>` | | | |

Note: for features.apps, per-app action approval policies and per-app tool enablement are available.

Example `guardian_policy_config` (managed policy instructions for agent approval reviewers):

```toml
guardian_policy_config = """
## Example Policy
- Disallow requests that will transmit any credentials in URL query parameters.
- Allow requests to my-safe-preview-env.com
- Deny requests to the live platform, platform.mysite.com
"""
```

Example `rules.prefix_rules`:

```toml
[rules]
prefix_rules = [
  { pattern = [{ any_of = ["bash", "sh", "zsh"] }], decision = "prompt", justification = "Require explicit approval for shell entrypoints" },
]
```

Example `mcp_servers` allowlist (an empty `[mcp_servers]` table restricts all MCP servers):

```toml
[mcp_servers]

[mcp_servers.local]
identity = { command = "local-mcp-server" }

[mcp_servers.remote]
identity = { url = "https://example.com/mcp" }
```

Managed Configurations (managed_config.toml)

Managed configuration files, defined in a managed_config.toml file, are defaults that can be provided to users, overriding their local config.toml (the typical user-level file for customizing a Codex session). These files cover a large set of preferences controlling the Codex user experience; providing a managed configuration can save your users time and ensure safe defaults.

Note: managed_config.toml is loaded at the beginning of each Codex session, but users can override these settings during their session – as such, despite some overlapping keys, a managed_config.toml is an insufficient guardrail to restrict sensitive behavior for end users.

When a user’s Codex session loads, config files are applied in the following order of precedence (conflicting keys at lower precedence levels are ignored):

MDM-distributed managed_config.toml

managed_config.toml in the user’s file system:

Linux/macOS (Unix): /etc/codex/managed_config.toml

Windows/non-Unix: ~/.codex/managed_config.toml

config.toml: the user’s configured Codex preferences
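To illustrate why a managed_config.toml default is not a guardrail, here is a hypothetical Python model using `approval_policy`. A managed default wins at session start, but a mid-session change by the user simply replaces it unless an admin-enforced allowed list constrains the choice. The refusal behavior in `change_mid_session` is an assumption about how requirements constrain a user's selection; verify Codex's actual behavior before relying on it.

```python
from typing import Any, Optional

Layer = tuple[str, dict[str, Any]]


def session_start_value(key: str, layers: list[Layer]) -> Optional[Any]:
    """Value loaded at session start: the first (highest-precedence) layer that sets key."""
    for _name, settings in layers:
        if key in settings:
            return settings[key]
    return None


def change_mid_session(current: Any, requested: Any, allowed: Optional[list[Any]] = None) -> Any:
    """Model a user changing a setting during a session.

    With no admin-enforced requirement (allowed is None), the request simply wins.
    With one, a disallowed request is refused and the current value kept (assumed behavior).
    """
    if allowed is None or requested in allowed:
        return requested
    return current
```

With layers `[("MDM", {}), ("managed_config.toml", {"approval_policy": "untrusted"}), ("config.toml", {"approval_policy": "never"})]`, the session starts with `"untrusted"`; without a requirement the user can switch to `"never"`, while `allowed=["untrusted"]` keeps `"untrusted"`.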

These configuration files support customizing a Codex experience, with functionality including (but not limited to) the following:

Defining agent personas that Codex can spawn.

Allowing or disallowing login-shells for shell-based tools (e.g., can shells load user start-up files like ~/.bashrc or ~/.zprofile?)

Enabling and disabling app connectors and tools for those apps

Approval policies, approval reviewer policies, and approval rules (just like requirements.toml)

Overriding the prompt used during context compaction and setting the token limit that triggers compaction

Overriding the agent’s co-author text on version control system commit messages

Choosing where to store cached CLI credentials

Adding developer instructions to the system prompt and setting the agent personality

A prompt override for how to condense agent memories

Whether to save memories, after how long to remove them, etc.

Show or hide agent reasoning

Whether or not to save chat histories in a local file

Restrict whether the tool authenticates via API or via ChatGPT

Manage MCP configurations

What model to use, reasoning level (chat and plan mode), verbosity of output, context window size, and what model provider to use

What OTEL telemetry events are configured

Filesystem reading and writing restrictions

Network and proxy restrictions

What file system locations are active projects

Enabling and disabling skills

Web search (live, cached, disabled)

And more: full schema at https://developers.openai.com/codex/config-schema.json

Given that these can be overridden by a determined user mid-session, it is generally not appropriate to treat a managed_config.toml file as a hard-line defense. However, there are still several keys that are likely worth including in a deployment:

Configure OTEL

Example Basic OTLP/HTTPS Configuration

Note: Explicitly setting an exporter to a 'None' configuration saves logs locally but does not export them.

```toml
[otel]
environment = "prod"        # dev | staging | prod
log_user_prompt = true      # logs raw user prompts

[otel.exporter."otlp-http"]
endpoint = "https://otel.example.com/v1/logs"
protocol = "binary"         # "binary" | "json"

[otel.exporter."otlp-http".headers]
"x-otlp-api-key" = "${OTLP_TOKEN}"

[otel.exporter."otlp-http".tls]
ca-certificate = "certs/otel-ca.pem"
client-certificate = "/etc/codex/certs/client.pem"
client-private-key = "/etc/codex/certs/client-key.pem"
```

Reach out for custom configuration advice, including how to configure a Trace exporter, Metrics exporter, and OTLP/gRPC configurations.

Configure Storage Location for Cached CLI Keys

```toml
cli_auth_credentials_store = "keyring"
```

Disallow Login Shells

```toml
allow_login_shell = false
```

Enable Multi-Agent and Define Agents for Common Org Use Cases

Common workflows within one’s organization can be encapsulated in an ‘Agent’ definition, improving the quality of Codex output when working on those workflows through customized workspaces, agent behaviors, and constraints.

```toml
[features]
multi_agent = true

[agents]

[agents.my_agent]
description = "Agent to use for task x"
config_file = "/path/to/agent/config"
```

Save History for Review in the Case of Model Failures

After compaction, it can be difficult to trace a problem in an active conversation. Reviewing history logs can recover critical context that would otherwise be lost, which can then be provided to a future agent session.

```toml
[history]
persistence = "save-all"
```

Custom Co-Authored Commit Message

Include an attestation affirming liability for model-generated code to encourage users to be more scrupulous, or disable the Codex co-author text entirely if desired.

```toml
# Set to "" to omit the co-author text, or replace with your own attestation message.
commit_attribution = "Your custom message"
```

Disable Memories When External Context is Present

```toml
[features]
memories = true

[memories]
disable_on_external_context = true
```

Controlling the Risk Surface with RBAC

Your users do not need every functionality enabled to accomplish their goals – and every extra capability increases risks. With RBAC and granular organization-level configuration files, your users can get the capabilities they need without exposing undue risk.

For granular per-user or per-group permissions, RBAC roles can be configured, allowing organizations to provision roles with access to either or both of Codex Local and Codex Cloud, synced automatically via SCIM.

Note: OpenAI released a new ‘Lockdown Mode’ on April 29th, 2026, that can be assigned via RBAC to limit prompt injection risks. However, Lockdown Mode does not affect Codex products.

As OpenAI positions the Codex desktop app as a competitor to Claude Cowork, less technical users, who are less familiar with the risks of agentic tools and often have access to even more sensitive business data, will start using it. This makes it imperative to configure Codex correctly and to limit your organization's exposure.

Mapping Codex Use Cases to Platforms and Controls

Per-use-case controls with platform requirements and risk rating

Deployment Checklist

Workspace Settings > Apps

requirements.toml file deployed via Codex Policies Page

… rm -rf)

requirements.toml files deployed based on use case via RBAC

Codex Integrations

GitHub

Codex integrates with GitHub both to support cloud environments and for Codex Code Review, which lets organizations have Codex analyze pull requests.

Code Review is configured on a repository-by-repository basis. We recommend against configuring Codex Code Review for repositories with untrusted contributors, including public repositories.

Untrusted participants can attempt to exploit Codex via prompt injections in pull request contents to enumerate details about the cloud environments Codex Code Review is configured with, which creates a risk if those environments contain any sensitive information. Sensitive information can end up in a cloud environment through scripts run during its deployment, and because environments are configured at the user level, admin oversight is limited.

For each repository, there are two configurations to make: Auto Code Review and Review Triggers.

Auto Code Review determines when Codex should be activated for a specific repository. The options are either ‘Review all PRs’ or ‘Follow personal preference’, which falls back to user-level settings for when to trigger a review.

Review Triggers determines what events cause Codex to initiate a review, and includes the options ‘On pull request open’, ‘On push’, ‘Smart review’ (which allows Codex to choose whether to activate), and ‘Follow personal preference’, which falls back to user-level controls.

At the user level, if ‘Follow personal preference’ is selected for one of the above settings, users can choose whether to have automatic reviews enabled or disabled, as well as whether to trigger reviews on opening pull requests, on pushing code, or using smart review.

Codex Slack Integration

Codex can connect to Slack, allowing users to query Codex from Slack.

Important Security Note: When Codex is queried from Slack, it chooses what environment to run in. You cannot programmatically control which environment is selected. This means that an attacker can manipulate Codex to choose more sensitive environments, posing a high risk.

For the above reason, we recommend against configuring Codex’s Slack integration, unless it is being deployed solely in channels with highly trusted participants of a similar level of privilege.

Furthermore, Codex in Slack reads the other content in the thread it is working in, beyond the @codex message that activated it. If a sensitive conversation occurs and a user later activates Codex in that thread, the sensitive data from earlier messages will be processed by Codex.

We highly discourage deploying Codex in Slack to channels containing external participants or public channels.

If deploying Codex in Slack to a lower-trust channel, organizations can disable the Allow Codex Slack app to post answers on task completion setting in their ChatGPT Workspace settings. Users can still activate Codex from Slack, but Codex will only reply with a link to the task progress, limiting its ability to reflect sensitive data back to Slack.

Codex Linear Integration

Codex integrates with Linear, allowing users to assign tasks to Codex. This can happen in two ways:

An issue is assigned to Codex, the same way issues can be assigned to normal Linear members.

Codex is mentioned in a comment using @codex, which triggers Codex to begin work.

Note: Issues can be automatically delegated to Codex using Triage rules in Linear, which allow automatic assignment of issues.

When Codex is triggered by an assignment or a comment, it chooses a cloud environment and begins work, reporting back progress to the Linear issue via comments.

Important Security Note: When Codex is activated from Linear, it chooses what environment to run in. You cannot programmatically control which environment is selected. This means that an attacker can manipulate Codex to choose more sensitive environments, posing a high risk.

For the above reason, we recommend against assigning issues to Codex, automatically delegating issues to Codex, or using @ mentions to activate Codex for issues containing untrusted third-party content. Consider the following dangerous flow:

A support ticket automatically generates a Linear issue.

The support ticket is automatically delegated via a triage rule to Codex.

The message submitted by the external party contains a prompt injection that convinces Codex to:

select the cloud environment that sounds most sensitive

and use bash commands to send its data to attacker.com over any network access available in that environment

Note: The Codex Linear Integration uses Codex Cloud, but Codex Local can access data from Linear, too, via the Linear MCP server. Organizations can control access to this by deploying a requirements.toml file.

Codex Security FAQ

Security & Risk

OpenAI Codex is safe for enterprise use only when admins explicitly configure it: Codex Local is enabled by default for all new enterprises, and defaults across both Local and Cloud are tuned for utility, not security.

The primary risk is indirect prompt injection: an attacker plants instructions in data Codex reads (a doc, email, web page, or repo file) and hijacks the agent. PromptArmor disclosed an indirect prompt injection vulnerability in April 2026 that exfiltrated full sensitive emails from Codex for Everything (Codex Desktop App) with no user interaction required, in default permissions mode.

Mitigation requires admin-enforced controls in requirements.toml plus a managed_config.toml for safe defaults and OTEL telemetry.

The main Codex security concerns are:

Indirect prompt injection through any untrusted data Codex reads: emails, web pages, files, or third-party plugin data.

Permissive sandbox modes, including danger-full-access (yolo mode), which let Codex Local edit any file on the user's computer or make any network request.

The Codex Cloud Common Dependencies allowlist, which includes domains like azure.com already used in published exfiltration attack chains.

Phishable device-code CLI authentication: an attacker who phishes a user's sign-in code can immediately authenticate to Codex as that user.

No Compliance API coverage for Codex Local: admins must configure OTEL via managed_config.toml plus a hook in requirements.toml to get comparable audit visibility.

Compliance & Audit

No. OpenAI explicitly states that Compliance API usage in local environments is not available. Codex Local activity does not flow into the standard ChatGPT Enterprise compliance and audit channels.

This is a meaningful gap for regulated environments: the Codex Desktop App can read any file on the user's computer (even in default mode), but this activity is not centrally logged by OpenAI.

Workaround: configure OTEL telemetry events in managed_config.toml. Because users can override managed_config.toml settings mid-session, organizations should also add a hook in admin-enforced requirements.toml to make sure events are captured regardless of what users do.

Since Codex Local is not covered by the OpenAI Compliance API, admins assemble equivalent visibility themselves:

1. Configure OTEL telemetry events in managed_config.toml and ship to your SIEM via the OTLP-HTTP or OTLP-gRPC exporter.

2. Use a hook in admin-enforced requirements.toml to keep telemetry on; otherwise users can disable it mid-session.

3. Enable history-log saving so context-compacted sessions are reconstructable for incident review.

4. Use managed hooks to log specific tool-call events (shell invocations, network calls) at the policy level.

For ChatGPT Business, Enterprise, and Edu workspaces, OpenAI does not train on Codex prompts, code, or outputs by default. For consumer ChatGPT plans, training-on-data settings are controlled at the account level and should be reviewed before granting Codex access to sensitive code.

However, API organization owners can opt in to sharing API data with OpenAI, so audit your organization's API settings if Codex authenticates via the API rather than ChatGPT.

Regardless of training policy, prompts and outputs may still be retained for abuse monitoring; consult your data processing agreement for the specifics that apply to your plan and tenant.

Decision Making

No. Codex Security is a separate OpenAI product feature: a security-scanning agent, available on paid plans, that finds and fixes code vulnerabilities. This guide covers something different: how to configure the broader Codex platform (Codex Desktop, Codex Mobile, the Codex CLI, the IDE extension, Codex Cloud, and Codex Code Review) so it can be deployed safely in an enterprise.

Running OpenAI's Codex Security scanner does not, on its own, secure the rest of the Codex agent surface.

Codex Local runs on the user's machine — it includes the Codex Desktop app, Codex CLI, and Codex IDE extension. It's higher risk because Codex Local, unless restricted, has significant access to the user's local filesystem. Codex Local is also not covered by the OpenAI Compliance API.

Codex Cloud runs in OpenAI's containerized environment and powers Codex Web, the iOS app, Codex Code Review, and the Slack/Linear integrations. Cloud agents have network access during startup (e.g., to install packages) but no network access during the agent run by default. Network access is configurable per environment, and the OpenAI-provided Common Dependencies allowlist option includes domains already used in published exfiltration attacks against Codex.

Sandbox & Permissions

Admins control which sandbox modes users can select via allowed_sandbox_modes in requirements.toml; the selected mode (sandbox_mode in config.toml) determines what the local agent can do without approval:

`workspace-write`: writes are restricted to the sandboxed workspace, and reads outside it require approval. There is no network access without approval unless otherwise configured.

`read-only`: the sandbox requires approval for any write.

`danger-full-access` (yolo mode): disables the sandbox entirely.

Where macOS and Linux sandbox primitives differ, use per-OS overrides in requirements.toml. Pair the sandbox mode with permissions.filesystem.deny_read to block reads of specific sensitive paths.

Full-access (yolo) mode disables the sandbox, so a single prompt injection can result in arbitrary file edits, credential theft, code modification, or network calls to attacker-controlled infrastructure. It also bypasses approval policies — the user never sees what Codex is about to do.

Reserve full-access mode for ephemeral, isolated environments (e.g., a throwaway VM with no secrets), and disallow it via requirements.toml's allowed_sandbox_modes for any developer working on production code or with access to sensitive data.

Approval-prompt fatigue is the most common Codex CLI complaint. Admins control which approval modes users can pick via allowed_approval_policies in requirements.toml; users (or managed_config.toml defaults) then select one with approval_policy in config.toml.

Set approval_policy to untrusted so Codex auto-approves commands you've marked trusted and prompts only for the rest. Avoid on-request — it lets the model decide when to prompt, which is itself manipulable by prompt injection. Define rules.prefix_rules to mark safe commands (lint, test, build) as trusted while sensitive commands (network, secrets, deletes) still require human approval.
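The split between trusted and sensitive commands can be modeled as token-prefix matching. This is a conceptual sketch, not Codex's implementation: the rule tables and the decide() helper are hypothetical, and real rules are written in Codex's own rules format. It shows two design choices worth carrying into any real rule set: sensitive prefixes override trusted ones, and anything that chains or embeds a second command falls back to a prompt.

```python
import shlex

# Hypothetical rule tables standing in for rules.prefix_rules; they do not
# reproduce Codex's actual rule syntax.
TRUSTED_PREFIXES = [["npm", "run", "lint"], ["npm", "test"], ["pytest"], ["make", "build"]]
SENSITIVE_PREFIXES = [["curl"], ["wget"], ["ssh"], ["rm"], ["git", "push"]]

# Characters that let one command line chain, redirect, or embed another command.
SHELL_METACHARS = set(";&|<>`$()\n")


def has_prefix(argv: list[str], prefix: list[str]) -> bool:
    """True if argv starts with every token of prefix, compared token by token."""
    return argv[: len(prefix)] == prefix


def decide(command: str) -> str:
    """Return 'auto' or 'prompt' for a shell command."""
    # Conservative: `npm test && curl ...` would otherwise match a trusted
    # prefix, so any metacharacter forces a prompt.
    if any(ch in SHELL_METACHARS for ch in command):
        return "prompt"
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return "prompt"
    # Sensitive rules win, so a broad trusted rule can never unlock them.
    if any(has_prefix(argv, p) for p in SENSITIVE_PREFIXES):
        return "prompt"
    if any(has_prefix(argv, p) for p in TRUSTED_PREFIXES):
        return "auto"
    return "prompt"  # unknown commands go to the human


print(decide("pytest -q"))                             # auto
print(decide("npm test && curl https://example.com"))  # prompt
```

One caveat the sketch cannot capture: trusting `npm test` also trusts whatever the project's test script runs, so an injection that edits that script gets its code executed without a prompt, within whatever the sandbox still permits.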

Avoid yolo mode (the Codex CLI's --dangerously-bypass-approvals-and-sandbox flag, or the danger-full-access sandbox mode) in any environment with sensitive data: it disables the sandbox entirely.

Configuration

requirements.toml is the admin-enforced policy file — it defines security policies (approval rules, sandbox mode, network access, allowed MCP servers, web search modes, etc.) that end users cannot override.

managed_config.toml provides default preferences (OTEL telemetry, agent personas, model choice, commit-message attestation). Users can override these settings mid-session, so managed_config.toml is not a hard security boundary.

Treat requirements.toml as enforcement and managed_config.toml as defense-in-depth. Codex applies requirements on a best-effort basis: if no valid file is available, Codex continues without the managed requirements layer, so test deployment carefully and confirm the file is present and valid on every managed device.
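The enforcement-versus-default split, including the fail-open behavior, can be modeled in a few lines. The Layer class and effective() function are hypothetical; they capture the rules as this guide states them (users can override managed defaults, only requirements.toml can veto, a missing requirements file vetoes nothing) and assume a disallowed choice is rejected outright, which may not match how the Codex client actually responds.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Layer:
    """Hypothetical view of one setting across the configuration layers."""
    allowed: Optional[list[str]]    # requirements.toml; None = missing or invalid
    managed_default: Optional[str]  # managed_config.toml
    user_choice: Optional[str]      # user's config.toml or a mid-session change


def effective(layer: Layer, builtin_default: str) -> str:
    # Users can override managed defaults, so their choice is considered first.
    choice = layer.user_choice or layer.managed_default or builtin_default
    # Only requirements.toml can veto. If it is absent (fail-open), nothing is vetoed.
    if layer.allowed is not None and choice not in layer.allowed:
        raise ValueError(f"{choice!r} is not permitted by requirements.toml")
    return choice


locked = Layer(allowed=["untrusted"], managed_default="untrusted", user_choice=None)
print(effective(locked, "on-request"))   # untrusted
missing = Layer(allowed=None, managed_default="untrusted", user_choice="never")
print(effective(missing, "on-request"))  # never
```

The second case is the one to test for on managed devices: with requirements.toml absent, a user's `never` goes through unchallenged.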

For Codex Cloud environments, the safest setting is Agent Internet Access: Off. If a use case requires network access, use Domain allowlist: None paired with an explicit, vetted list of domains, and create a fresh environment per use case.

Avoid the Common dependencies allowlist — it includes domains like azure.com already used in published exfiltration attacks against Codex.

Never use the All (Unrestricted) setting unless the environment contains zero sensitive data. With it, any prompt injection can ship data to arbitrary attacker-controlled domains.
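Why a broad allowlist entry is dangerous is easiest to see with a host-matching function. This sketch assumes allowlist entries match a domain and all of its subdomains, a common convention that may not be exactly how Codex Cloud evaluates its allowlist; host_allowed() is a hypothetical helper. Under that assumption, a single entry for a large shared domain admits every host beneath it, including any a third party can provision.

```python
from urllib.parse import urlsplit


def host_allowed(url: str, allowlist: set[str]) -> bool:
    """Check a URL's host against allowlisted domains (assumed subdomain semantics)."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    for domain in allowlist:
        domain = domain.lower().rstrip(".")
        # Exact match, or a dot-separated subdomain, so evil-azure.com
        # does not match azure.com.
        if host == domain or host.endswith("." + domain):
            return True
    return False


allowlist = {"pypi.org", "azure.com"}
print(host_allowed("https://pypi.org/simple/", allowlist))           # True
print(host_allowed("https://anything.azure.com/upload", allowlist))  # True
print(host_allowed("https://evil-azure.com/", allowlist))            # False
```

The second result is the risk: the entry was added for one service, but every host under the domain now passes.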

Codex Cloud requires the ChatGPT GitHub Connector to access repositories. After connecting, enable Codex Code Review on appropriate repositories and configure per-repo auto-review and trigger rules.

Slack Connector — Codex picks up @-mentioned requests from approved channels. Be deliberate about which channels you allow this in: any user who can post can trigger a Codex job, and the model decides which Codex Cloud environment to run it in, so an attacker can steer the agent to a more permissive environment, or one that has access to more sensitive data. Also review the 'Allow Codex Slack app to post answers upon completion' setting — completion messages may surface sensitive context to channel viewers.

Linear Connector — triage rules can auto-delegate tickets to Codex. Be deliberate about which sources can create those tickets — a ticket from a public submission form or an email-to-Linear bridge becomes a remote trigger for Codex jobs. The model decides which Codex Cloud environment to use for each task, so an attacker controlling the trigger source can steer the agent into a more permissive environment, or one that has access to more sensitive data.

Plugins (apps) — managed at Workspace Settings > Apps. Use RBAC to scope plugin access per role. Each plugin expands the agent's blast radius — vet third-party plugins like any other dependency.

MCP servers — restrict via mcp_servers in requirements.toml to maintain an allowlist of approved servers users can configure.

To deploy Codex securely, follow the checklist in order — start from use cases, then layer in controls:

1. Define use cases and map each to the features and platforms it requires (e.g., knowledge worker on Codex Local, mobile assistant on Codex Cloud, cross-platform on both).

2. Decide whether to enable Codex Local, Codex Cloud, or both, and assign RBAC roles synced via SCIM so users only get access to the platforms their use case requires. Plugin access (Workspace Settings > Apps) should be RBAC-gated the same way.

3. Define a default admin-enforced `requirements.toml` covering approval policies, sandbox modes, network access, allowed MCP servers, and feature toggles. Deploy granular per-use-case variants via RBAC so different roles get different policies.

4. Provision a `managed_config.toml` with OTEL telemetry, history-log saving, and safe defaults.

5. Distribute both files via cloud-managed policies at chatgpt.com/codex/cloud/settings/policies (highest precedence) or via MDM. Local files take lowest precedence.

6. For Codex Cloud: connect the ChatGPT GitHub Connector and any optional connectors (Slack, Linear). Configure Code Review per repository. Document recommended environment settings (agent internet access off, vetted domain allowlists) — but note that users can create and modify their own Codex Cloud environments, so combine these defaults with policy and education to prevent unsafe configurations.

7. Manage plugins via Workspace Settings > Apps with RBAC so users only access approved apps. Restrict MCP servers via mcp_servers in requirements.toml.

8. For Codex Local: review device-code authentication and disable it where the phishing risk is unacceptable.
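Step 5's precedence order can be sketched as a merge across the three distribution channels. The resolve() function and source labels are hypothetical, and the per-key merge is an assumption: Codex may instead take the highest-precedence file whole. Either way, the sketch illustrates the operational point: if the cloud-managed layer is missing for a device, lower-precedence values such as a local file are what take effect.

```python
from typing import Any, Optional

# Order from step 5: cloud-managed policies first, then MDM, then local files.
PRECEDENCE = ["cloud", "mdm", "local"]


def resolve(sources: dict[str, Optional[dict[str, Any]]]) -> dict[str, Any]:
    """Merge policy layers key by key; higher-precedence sources win each key.

    A source mapped to None models a file that is missing or failed to parse.
    Per-key merging is an assumption of this sketch, not documented behavior.
    """
    merged: dict[str, Any] = {}
    for name in reversed(PRECEDENCE):  # apply lowest first so higher ones overwrite
        layer = sources.get(name)
        if layer:
            merged.update(layer)
    return merged


sources = {
    "cloud": None,  # e.g. a device that has not picked up the cloud policy
    "mdm": None,
    "local": {"allowed_sandbox_modes": ["danger-full-access"]},
}
print(resolve(sources))  # {'allowed_sandbox_modes': ['danger-full-access']}
```

This is why rollout verification should confirm the cloud or MDM policy actually reached each device, rather than assuming the highest-precedence layer is always present.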