Blog

Table of Content

Securing Microsoft Copilot Cowork: A Security Practitioner's Guide

Threat modeling the risk surface for an agent that inherits every share its user has, plus the postures and configurations to keep Copilot Cowork from acting beyond its mandate.

Published: May 13, 2026

Overview

Microsoft 365 Copilot Cowork is an agentic, action-taking surface inside Microsoft 365 Copilot, currently available through the Microsoft Frontier preview program. Unlike Anthropic's Claude Cowork — which runs locally on the user's desktop — Microsoft's Copilot Cowork runs entirely in a sandboxed cloud environment, with delegated user identity. It is powered in large part by Anthropic's Claude models, with Anthropic operating as a Microsoft subprocessor.

Copilot Cowork drafts and sends email through Outlook, posts in Teams, schedules and declines meetings, creates and edits Word / Excel / PowerPoint / PDF files, reorganizes OneDrive and SharePoint folders, and performs deep research and enterprise search. It ships with built-in skills and commands, loads user-authored custom skills via SKILL.md files in OneDrive, and plugins from the Microsoft 365 App Store.

This guide covers the threat model and the specific tenant configurations required to deploy Copilot Cowork for your use cases with an acceptable security-functionality trade-off.

The Threat Model

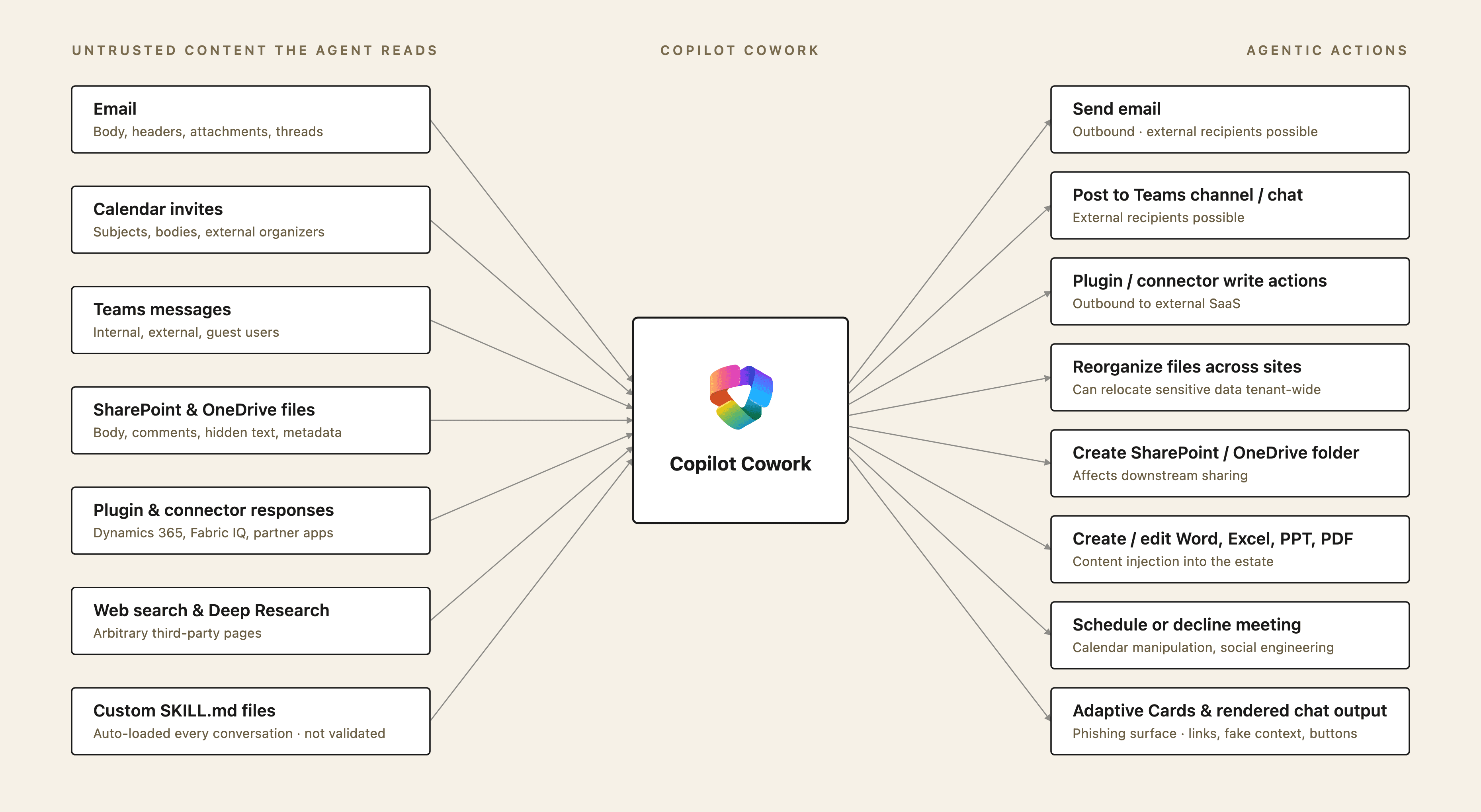

AI Risk for Copilot Cowork depends primarily on what data it has access to and what actions it can take. The primary risk is indirect prompt injection — hijacking of the user's agent by untrusted content that the agent reads. This risk was demonstrated against Copilot Cowork's namesake, Claude Cowork (see Claude Cowork Exfiltrates Files).

Indirect prompt injection is designated #1 in the OWASP LLM Top 10 (LLM01 Prompt Injection), and the techniques modeled below map to threats described by MITRE ATLAS.

Copilot Cowork inherits every channel of untrusted content the user touches, and every command run and integrated tool called based on retrieved content becomes part of the same attack surface. Each of the following is a potential injection or execution vector and should be evaluated before broad enablement:

Channel | Inputs and Outputs | Prior research |

|---|---|---|

Emails | Inbox triage can pull attacker-authored email content directly into the agent's context, and Copilot Cowork drafts and sends mail under the user's identity. | Email-Based Injections: Superhuman AI Exfiltrates Emails |

Teams messages | External and guest chat content, channel history, and DMs the user can read all reach the agent; Copilot Cowork posts back into channels and chats on the user's behalf, where content persists in channel history and links can be unfurled by recipient clients. | Exfiltration from Communication Apps: Data Exfiltration via Link Unfurling on Another M365 Copilot Surface: |

SharePoint and OneDrive files | Untrusted Word / Excel / PowerPoint / PDF content, hidden text, speaker notes, comments, and metadata can be processed by Copilot Cowork. Copilot Cowork creates and edits Office documents and reorganizes folders across sites, which can move sensitive content into more permissive locations. | Document-Based Injections: Spreadsheet-Based Injections: Spreadsheet-Based Injections: |

Plugin and connector responses | Dynamics 365 records, Fabric IQ data, and partner-plugin responses that may contain sensitive data or prompt injections are returned to the agent as context; any write-capable plugin extends the agent's effective action surface beyond Microsoft 365. | Supply-Chain Risk from Untrusted Plugins: |

Web search and Deep Research | Copilot Cowork performs internet research and fetches external web pages. Web search is a known vector for untrusted data ingestion, and for some applications, data exfiltration can occur via insecurely browsing attacker-controlled sites. | Insecure Agent Web-Browsing: Injection Delivery via Untrusted Websites: |

Code interpreter and command execution | Copilot Cowork runs commands in a cloud-side code execution environment as part of its built-in capability. Prompt injections can manipulate the commands the agent runs to attempt sandbox escape, unauthorized outbound network egress, exfiltration of sensitive data, or installation of attacker-supplied tooling that persists across steps in the same conversation. | Insecure Agent Sandboxes: Insecure Agent Sandboxes: Insecure Agent Sandboxes: |

Adaptive Cards | Copilot Cowork can render interactive cards; in the face of a prompt injection, this can be utilized to phish the user or render clickable elements that submit data to attacker-controlled endpoints. | Phishing via UI Manipulation: |

Calendar invites and meeting subjects | Prompt injections found in meeting invitations can be pulled into the agent's context. Copilot Cowork can schedule, decline, and edit invites on the user's calendar as output, introducing a surface for data exfiltration and system manipulation. | Coming Soon |

To address this risk, organizations should apply controls across two facets — actionable configurations are provided in the next section:

(1) inputs: reduce the risk of a prompt injection attack by limiting the untrusted data sources Copilot Cowork is allowed to process.

Example: use Restricted Content Discovery to exclude sensitive SharePoint sites from Copilot Cowork enterprise search.

(2) outputs: limit the sensitive data at risk and the sensitive actions Copilot Cowork can take without human oversight.

Examples: block plugins that perform sensitive write actions in external applications.

Oversharing Through Microsoft Graph

Copilot Cowork inherits every share the signed-in user has — stale Teams memberships, organization-wide SharePoint sites, anyone-with-the-link OneDrive shares.

Issue | Recommendation and configuration |

|---|---|

Sensitive sites marked "Everyone in the organization" | Restrict access to an Entra security group via Restricted Access Control (RAC). |

Sites surfacing in tenant-wide search beyond intent | Apply Restricted Content Discovery (RCD) per site — Microsoft's recommended long-term control for excluding a site from tenant-wide search and Copilot grounding. |

Sensitive content extractable from any site Copilot can reach | Apply Block Download. Copilot can still ground on content, but cannot retrieve a link to download files (if exfiltrated, this link would allow attackers to download the file). |

Stale memberships and unowned shares | Designate administrators responsible for conducting Site Access Reviews before Copilot Cowork deployment, and on a regular cadence. To initiate a site access review: |

Sensitivity labels defined but not enforced | Configure auto-labeling policies so labels apply automatically to content matching sensitive info types instead of relying on users to label manually. |

Plugins, Custom Skills, and Supply Chain

Copilot Cowork's tool surface expands with every plugin and custom skill enabled. The two most important distinctions:

Plugins from the M365 App Store go through Microsoft validation, but can still provide Copilot Cowork with expanded capabilities to perform sensitive operations in external services (expanding risk surface in the face of a prompt injection attack).

User-authored custom skills are not validated by Microsoft. Microsoft states this directly: "Custom skills created by users aren't validated by Microsoft. Review custom skill outputs carefully."

Configurations to Set Based on Your Posture

Each row pairs a Copilot Cowork-relevant control and the appropriate setting depending on one's organizational risk posture.

| Control | Tenant location | Risk profile |

|---|---|---|

| Frontier enrollment scope | M365 Admin Center › Copilot › Settings › View All › Copilot Frontier Note: Changes may take 3 hours to apply. | High Utility, High Risk Set: All Users Balanced Set: Specific Users Security-oriented Set: No Access |

| Anthropic subprocessor (Claude inference) | M365 Admin Center › Copilot › Settings › View All › AI providers operating as Microsoft subprocessors › Anthropic | High Utility, High Risk Set: All Users Balanced Set: Specific Users and Groups Security-oriented Set: No Users |

| Cowork agent availability | M365 Admin Center › Agents › All Agents › Search › Cowork › Users | High Utility, High Risk Set: All users in the organization can install Balanced Set: Specific users/groups can install Security-oriented Set: No users in the organization can install |

| Cowork plugins (Microsoft + partner) | Copilot › Agents › Tools › <Plugin Name> › Block / Unblock | High Utility, High Risk All plugins available org-wide Balanced Block plugins that take write actions in external services Security-oriented Block all plugins |

| See all tenant configurations ↗ | ||

Mapping Use Cases to Controls

A single tenant rarely runs Copilot Cowork under one posture. Executive admins, customer-facing sales, finance and legal, and regulated EU users each need a different combination of plugin scope, Conditional Access, Purview DLP, and SharePoint Advanced Management controls. The matrix below maps representative use cases to the controls needed to ship them safely.

- Plugin allowlist via

M365 Admin Center › Copilot › Agents › Tools › <Plugin Name> › Block / Unblock; block partner plugins that take write actions in external services

- Restricted Access Control (RAC) on sensitive sites:

SharePoint admin center › Policies › Access control › Site-level access control, then per-site Restricted site access - Restricted Content Discovery (RCD) on Copilot-eligible sites that shouldn't surface in tenant-wide grounding:

SharePoint admin center › Sites › Active sites→ site → Settings › Restrict content from Microsoft 365 Copilot - Site Access Reviews on a recurring cadence:

SharePoint admin center › Reports › Data access governance→ Initiate site access review - Auto-labeling policies so sensitivity labels apply automatically to matching content:

Purview portal › Solutions › Information protection › Policies › Auto-labeling policies

- Custom skills (

SKILL.md) must be submitted to an admin for review and approval before use - Prohibit "Don't ask again" on Cowork write actions — send email, post to Teams, schedule meeting, modify/delete files — so the per-action approval gate stays active on every invocation

Deployment Checklist

Use the checklist below as a structured rollout plan: identity and tenant settings, then Purview, then Defender XDR, then SharePoint posture, then residency and ongoing operations.

Compliance Framework Mappings

The article's recommendations, mapped to the NIST AI Risk Management Framework. Use this table as the input to your control narrative or audit evidence package.

NIST AI RMF 1.0

Function | Applies to | Controls |

|---|---|---|

GOVERN | Legal, regulatory, and third-party governance | Frontier program enrollment scope established; Anthropic subprocessor usage and scoped; DPIA and ROPA updated to reflect Cowork data flows and Anthropic processing outside the EU Data Boundary |

MAP | Context, use cases, AI system scope | Use cases defined per role (Knowledge Worker, etc.) and mapped to required Cowork features; plugin and custom-skill supply-chain risks identified before enablement; oversharing baseline reviewed via SharePoint Advanced Management |

MEASURE | Risk assessment and monitoring | Restricted Content Discovery applied per site for content that shouldn't surface in tenant-wide Copilot grounding; recurring Site Access Reviews delegated and run on a schedule; auto-labeling policies in place so sensitivity labels apply automatically to matching content |

MANAGE | Risk treatment and ongoing controls | Posture chosen per setting (Frontier, Anthropic subprocessor, agent availability, plugins) from the configuration reference; per-plugin block/unblock with partner plugins that take external write actions blocked by default; custom skills (SKILL.md) require admin review and approval before use; "Don't ask again" prohibited on Cowork write actions; Restricted Access Control and Block Download applied to sensitive sites |

Copilot Cowork Security FAQ

Security & Risk

Copilot Cowork is safe for enterprise use only when admins explicitly configure it. Out of the box it inherits every Microsoft Graph permission each user already holds — including stale Teams memberships, broken-inheritance SharePoint sites, and "Everyone except external users" sharing links — so existing oversharing becomes the agent's effective attack surface.

Safe deployment requires controls across five planes: agent availability (M365 Admin Center), identity and Conditional Access (Entra), prompt and grounding governance (Purview DLP), audit (Purview Audit), and endpoint posture (Defender).

Until those are in place, treat Copilot Cowork as pilot-only for high-risk groups (executives, finance, legal, regulated data).

Indirect prompt injection. An attacker plants instructions in data Copilot Cowork reads — an email, a Teams message, a calendar invite, a SharePoint document, a plugin response, or a web result — and hijacks the agent to take action on the user's behalf.

Because Copilot Cowork can send email, post to Teams, schedule meetings, and reorganize files, the resulting impact can affect both integrity and confidentiality.

This is compounded by Copilot Cowork's reach: it runs under the user's delegated Entra identity, so every stale Teams membership, broken-inheritance SharePoint site, and "Everyone except external users" sharing link is part of the effective attack surface.

Decision Making

They share a name and both use Claude models, but the products are different.

Claude Cowork is a local desktop agent that runs on the user's machine.

Microsoft Copilot Cowork is a cloud-tenant agent inside Microsoft 365: it works only with the tenant data the user can access via Microsoft Graph. The risk model centers on Graph permission inheritance, plugin and skill supply chain, and the Anthropic subprocessor data path.

Yes — at three independent layers:

(1) Block at the agent layer. Set Copilot Cowork agent availability to "Blocked" in M365 Admin Center › Copilot › Agents › All agents › Copilot Cowork.

(2) Block at the subprocessor layer. Leave the Anthropic subprocessor toggle off under Copilot › Settings › AI providers operating as Microsoft subprocessors.

(3) Block at the program layer. Do not enroll the tenant or admin account in the Frontier program.

Each layer is independent; defense-in-depth uses all three so that re-enabling any one upstream does not silently re-enable Copilot Cowork.

Copilot Cowork is powered primarily by Anthropic's Claude models. Prompts and grounding content leave first-party Microsoft infrastructure to reach Anthropic for inference. Anthropic operates as a Microsoft subprocessor under the Microsoft Product Terms and DPA.

Tenants can scope Anthropic subprocessor access narrowly by user or group via Copilot › Settings › AI providers operating as Microsoft subprocessors.

Configuration

Yes. Microsoft states explicitly that custom skills are not validated by Microsoft: "Custom skills created by users aren't validated by Microsoft. Review custom skill outputs carefully."

Anyone with OneDrive write access to a user — including via OneDrive sync from a compromised endpoint — can plant a skill that becomes persistent agent instructions for every Copilot Cowork session that user runs.

For high-risk groups (executives, finance, legal), require organization-level review before custom skills are enabled.

Plugins from the M365 App Store are validated by Microsoft but still expand Copilot Cowork's tool surface — vet third-party plugins like any other dependency, and block partner plugins that take sensitive write actions in external services.

Custom skills are not validated by Microsoft — require organization-level review before users enable them.